Last updated 2002.11.15 Fri.

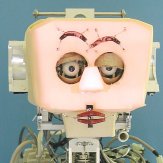

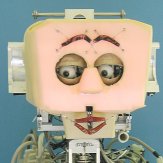

Human-like Head Robot WE-3RV

1. Objective

We have been developing human-like head robots in order to develop new head mechanisms and functions for a humanoid robot that can communicate naturally with humans by expressing human-like emotions. In 2001, we developed the human-like head robot "WE-3RV" (Waseda Eye No.3 Refined V), which has four senses and can express emotions through its face.

2. Hardware Overview

Fig. 1 and Fig. 2 show the hardware overview of WE-3RV. WE-3RV has 28 DOF (Neck: 4, Eyeballs: 4, Eyelids: 6, Eyebrows: 8, Lips: 4, Jaw: 1, Lung: 1) and many sensors that serve as sensory organs (visual, auditory, cutaneous and olfactory sensation) for external stimuli. WE-3RV can express its emotions using its facial expression mechanisms. Each part is described below.
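As a quick consistency check, the DOF breakdown can be written down as a small table in code. The flat joint-command layout produced by `joint_slices` is a hypothetical convention for illustration only; the joint ordering actually used by the controller is not described here.

```python
from typing import Dict

# DOF breakdown of WE-3RV, taken from the text.
DOF_BY_PART: Dict[str, int] = {
    "Neck": 4,
    "Eyeballs": 4,
    "Eyelids": 6,
    "Eyebrows": 8,
    "Lips": 4,
    "Jaw": 1,
    "Lung": 1,
}


def total_dof(dof_by_part: Dict[str, int]) -> int:
    """Total number of degrees of freedom."""
    return sum(dof_by_part.values())


def joint_slices(dof_by_part: Dict[str, int]) -> Dict[str, range]:
    """Give each part a contiguous index range in one flat joint-command vector.

    HYPOTHETICAL layout: parts are packed in dictionary order.
    """
    slices: Dict[str, range] = {}
    start = 0
    for part, count in dof_by_part.items():
        slices[part] = range(start, start + count)
        start += count
    return slices


print(total_dof(DOF_BY_PART))  # 28
```

With this packing, the neck occupies indices 0 to 3 and the lung the last index, 27.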

Fig. 1 WE-3RV (Whole View)

Fig. 2 WE-3RV (Head Part)

2.1 Neck

The neck has 4 DOF. Each axis moves at up to 160 [deg/s], similar to a human neck. The yaw axis of the neck is driven by a Harmonic Drive Systems DC motor, and the other axes are driven antagonistically by a tendon mechanism consisting of a DC motor and a spring.
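To illustrate what the 160 [deg/s] limit means for motion commands, the sketch below uses a generic per-cycle rate limiter: each control cycle, the commanded angle moves toward the target by at most 160 × dt degrees. This is not the controller described for WE-3RV (the text gives no control law for the Harmonic Drive or tendon-spring axes), and the 10 ms control period is a hypothetical value.

```python
import math
from typing import List

NECK_MAX_SPEED_DEG_S = 160.0  # maximum neck angular velocity from the text


def rate_limited_step(current_deg: float, target_deg: float,
                      max_speed_deg_s: float, dt_s: float) -> float:
    """Move one control cycle toward the target without exceeding max_speed_deg_s."""
    max_step = max_speed_deg_s * dt_s
    error = target_deg - current_deg
    if abs(error) <= max_step:
        return target_deg
    return current_deg + math.copysign(max_step, error)


def neck_trajectory(start_deg: float, target_deg: float,
                    dt_s: float = 0.01,  # HYPOTHETICAL control period
                    max_speed_deg_s: float = NECK_MAX_SPEED_DEG_S) -> List[float]:
    """Commanded angle for each control cycle, ending exactly at the target."""
    angles = [start_deg]
    while angles[-1] != target_deg:
        angles.append(rate_limited_step(angles[-1], target_deg, max_speed_deg_s, dt_s))
    return angles


def min_move_time_s(angle_deg: float,
                    max_speed_deg_s: float = NECK_MAX_SPEED_DEG_S) -> float:
    """Lower bound on the duration of a move, ignoring acceleration limits."""
    return abs(angle_deg) / max_speed_deg_s
```

For example, a 40 [deg] turn cannot take less than 40 / 160 = 0.25 [s]; with a 10 ms cycle the limiter needs at least 25 steps of 1.6 [deg] each.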

2.2 Eyeballs and Eyelids

The eyeballs have 4 DOF. Their maximum angular velocity is 600 [deg/s], similar to that of a human.

The eyelids have 6 DOF. WE-3RV can rotate its upper eyelids, which lets it use the corners of its eyes for expression. The maximum angular velocity for opening and closing the eyelids is 900 [deg/s], similar to that of a human. Furthermore, the robot can blink within 0.3 [s], as fast as a human.
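The velocity figures above translate into simple timing bounds. The sketch below assumes the eyes and eyelids move at their peak velocity for the whole motion (no acceleration phase), so the results are lower bounds; the 30 [deg] eye movement and 60 [deg] eyelid travel used in the usage note are hypothetical values, not measurements of WE-3RV.

```python
EYEBALL_MAX_SPEED_DEG_S = 600.0  # from the text
EYELID_MAX_SPEED_DEG_S = 900.0   # from the text
BLINK_LIMIT_S = 0.3              # WE-3RV blinks within 0.3 s


def min_saccade_time_s(angle_deg: float) -> float:
    """Fastest possible eye movement over angle_deg at peak velocity."""
    return abs(angle_deg) / EYEBALL_MAX_SPEED_DEG_S


def min_blink_time_s(eyelid_travel_deg: float, hold_closed_s: float = 0.0) -> float:
    """Close and reopen the eyelid at peak velocity, optionally holding it closed."""
    return 2.0 * eyelid_travel_deg / EYELID_MAX_SPEED_DEG_S + hold_closed_s


def max_travel_for_blink_deg(limit_s: float = BLINK_LIMIT_S) -> float:
    """Largest eyelid travel whose close-and-reopen cycle still fits in limit_s."""
    return EYELID_MAX_SPEED_DEG_S * limit_s / 2.0
```

Under these assumptions a 30 [deg] eye movement takes at least 0.05 [s], a 60 [deg] blink takes at least about 0.13 [s], and any eyelid travel up to 135 [deg] fits within the 0.3 [s] blink.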

2.3 Facial Expression Mechanisms

WE-3RV can express its emotions using its eyebrows, lips and facial color. The eyebrows and lips are driven by stepping motors and springs.

In addition to a red facial color, we gave the robot a pale facial color. To produce the two colors, we layered red and blue EL (electroluminescent) sheets. EL sheets are thin and light and do not interfere with the other devices on the skin, such as the FSRs (Force Sensing Resistors) used to detect external forces for the cutaneous sensation. We arranged the sheets on the robot's forehead, around its eyes, and on its cheeks and nose.

2.4 Voice System

We added a voice system to WE-3RV, consisting of an audio amplifier and a speaker. Because a flexible tube carries the sound from the speaker to the robot head, WE-3RV can speak without a speaker mounted on the head. The words spoken by WE-3RV have no specific meaning; we intend them as sounds that strengthen the effect of its facial expressions. WE-3RV chooses the word it speaks according to its emotion.

2.5 Robot Skin

To make the robot friendlier when humans touch it, we placed soft, clear gel under flesh-colored silicone rubber to form the robot skin. WE-3RV's skin has an input function, an output function and a soft feel to the touch.

2.6 Sensors

(1) Visual Sensation

For visual sensation, we installed a black-and-white CCD camera in each eye and process the camera images with two center-of-gravity calculation boards and one brightness calculation board. The image from each eye's camera is input to a center-of-gravity board, which lets WE-3RV recognize the target position and pursue the target. The left eye's camera image is also input to the brightness calculation board, which enables the robot to adjust to the brightness of its surroundings.
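The center-of-gravity operation done on the image boards can be illustrated in software. The sketch below assumes the target is whatever is brighter than a threshold and computes the centroid of those pixels; the thresholding rule, the threshold value, and the use of the mean pixel value as the "brightness" are assumptions, since the text does not say what the boards compute internally.

```python
from typing import List, Optional, Tuple

Image = List[List[int]]  # grayscale image: rows of pixel values 0..255


def center_of_gravity(image: Image, threshold: int) -> Optional[Tuple[float, float]]:
    """Centroid (x, y) of all pixels at or above threshold, or None if there are none."""
    count = 0
    sum_x = 0
    sum_y = 0
    for y, row in enumerate(image):
        for x, value in enumerate(row):
            if value >= threshold:
                count += 1
                sum_x += x
                sum_y += y
    if count == 0:
        return None
    return sum_x / count, sum_y / count


def offset_from_image_center(image: Image, point: Tuple[float, float]) -> Tuple[float, float]:
    """Pixel error between a point and the image center.

    A pursuit controller would turn the eyes and neck to drive this error to zero.
    """
    center_x = (len(image[0]) - 1) / 2
    center_y = (len(image) - 1) / 2
    return point[0] - center_x, point[1] - center_y


def mean_brightness(image: Image) -> float:
    """Average pixel value, a simple stand-in for the brightness calculation."""
    pixels = [value for row in image for value in row]
    return sum(pixels) / len(pixels)
```

For a 5 × 5 image with a bright 2 × 2 patch in columns 3 and 4 and rows 1 and 2, the centroid is (3.5, 1.5), i.e. 1.5 pixels right of and 0.5 pixels above the image center. Running the same function on both eyes' images gives two target positions, one per eye.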

(2) Auditory Sensation

We used two condenser microphones for auditory sensation. WE-3RV localizes a sound from the differences in loudness and phase between the right and left microphones.
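A common way to obtain the two cues from a pair of microphones is sketched below: the time (phase) difference is taken from the peak of the cross-correlation and converted to a far-field direction, and the loudness difference is the RMS level ratio in dB. Cross-correlation is a standard technique swapped in for illustration; the text does not say how WE-3RV computes or combines the cues. The 0.15 m microphone spacing and 16 kHz sample rate in the usage note are hypothetical.

```python
import math
from typing import Sequence

SPEED_OF_SOUND_M_S = 343.0  # in air at about 20 degrees C


def max_lag_samples(mic_distance_m: float, sample_rate_hz: float) -> int:
    """Largest physically possible delay between the microphones, in samples."""
    return math.ceil(mic_distance_m / SPEED_OF_SOUND_M_S * sample_rate_hz)


def interaural_lag(left: Sequence[float], right: Sequence[float], max_lag: int) -> int:
    """Lag (samples) at the cross-correlation peak.

    Positive means the right channel is delayed, i.e. the sound reached the left microphone first.
    """
    best_lag, best_score = 0, -math.inf
    for lag in range(-max_lag, max_lag + 1):
        score = 0.0
        for i, sample in enumerate(left):
            j = i + lag
            if 0 <= j < len(right):
                score += sample * right[j]
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag


def azimuth_deg(lag: int, sample_rate_hz: float, mic_distance_m: float) -> float:
    """Far-field source direction from the time difference; positive angles point left."""
    ratio = SPEED_OF_SOUND_M_S * (lag / sample_rate_hz) / mic_distance_m
    ratio = max(-1.0, min(1.0, ratio))  # clamp: noise can push |ratio| slightly above 1
    return math.degrees(math.asin(ratio))


def level_difference_db(left: Sequence[float], right: Sequence[float]) -> float:
    """Loudness difference (left minus right) in dB, from each channel's RMS."""
    rms_left = math.sqrt(sum(x * x for x in left) / len(left))
    rms_right = math.sqrt(sum(x * x for x in right) / len(right))
    if rms_left == 0.0 or rms_right == 0.0:
        raise ValueError("one channel is silent")
    return 20.0 * math.log10(rms_left / rms_right)
```

With 0.15 m spacing at 16 kHz, a 3-sample delay of the right channel gives an azimuth of about 25 [deg] to the left; if the right channel is also half as loud, the level difference is about +6 dB, so both cues agree on the left side.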

(3) Cutaneous Sensation

Of the human cutaneous sensations, WE-3RV has tactile and temperature sensation. We used FSRs (Force Sensing Resistors) for the tactile sensation and CMOS temperature sensor ICs for the temperature sensation.

FSRs can detect even very weak forces and are thin and light. Using a two-layer FSR structure, we also devised a method for recognizing not only the magnitude of a force but also the manner of touching.

(4) Olfactory Sensation

We used four semiconductor gas sensors for olfactory sensation. They are placed at the back of the robot and connected by flexible air hoses between the nose and the lung. The lung consists of four parallel cylinder-piston sets and a DC motor that drives the pistons. WE-3RV can recognize the smells of alcohol, ammonia and cigarette smoke.
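One way to tell smells apart with four sensors is to compare the pattern of their responses with stored reference patterns. The sketch below uses cosine similarity, so that a stronger concentration of the same gas (all responses scaled up) still matches. The reference patterns, the similarity threshold, and the use of cosine matching are all hypothetical; the text does not describe WE-3RV's recognition method, and real patterns would come from calibrating the actual sensors.

```python
import math
from typing import Dict, Optional, Sequence, Tuple

# HYPOTHETICAL reference patterns: relative responses of the four gas sensors.
REFERENCE_PATTERNS: Dict[str, Tuple[float, float, float, float]] = {
    "alcohol": (0.9, 0.3, 0.2, 0.1),
    "ammonia": (0.2, 0.9, 0.3, 0.2),
    "cigarette smoke": (0.3, 0.2, 0.8, 0.6),
}


def normalize(vector: Sequence[float]) -> Tuple[float, ...]:
    """Scale to unit length so that only the pattern, not the concentration, matters."""
    norm = math.sqrt(sum(x * x for x in vector))
    if norm == 0.0:
        raise ValueError("sensor reading is all zeros")
    return tuple(x / norm for x in vector)


def classify_smell(reading: Sequence[float],
                   references: Dict[str, Tuple[float, float, float, float]] = REFERENCE_PATTERNS,
                   min_similarity: float = 0.9) -> Optional[str]:
    """Best-matching smell by cosine similarity, or None if nothing is similar enough."""
    unit_reading = normalize(reading)
    best_label: Optional[str] = None
    best_similarity = -1.0
    for label, pattern in references.items():
        similarity = sum(a * b for a, b in zip(unit_reading, normalize(pattern)))
        if similarity > best_similarity:
            best_label, best_similarity = label, similarity
    return best_label if best_similarity >= min_similarity else None
```

A reading of (1.8, 0.6, 0.4, 0.2) is exactly twice the alcohol pattern and is classified as alcohol, while a reading that only excites the fourth sensor matches nothing closely enough and returns `None`.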

2.7 Total System

Fig. 3 shows the total system configuration of WE-3RV. We used two PC/AT compatible computers connected via Ethernet.

The first computer, PC1, obtains visual information from the image processing boards and determines the robot's mental state by integrating information from the four sense organs.

The other computer, PC2, controls the DC motors of the eyeballs, neck, eyelids, jaw and lung, as well as the facial expression mechanisms, the facial color and the voice system, according to the visual and mental information sent from PC1. In addition, PC2 reads the outputs of the microphones, the semiconductor gas sensors, the FSRs and the temperature sensors, determines from them the sound direction, the kind of smell and the touching manner, and transmits these results to PC1.
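The data flow between the two computers can be made concrete with a pair of message types. In the sketch below, the class names, field names, and the one-JSON-object-per-line encoding are hypothetical; the text only says that the PCs are linked by Ethernet and which information flows in each direction. The transport itself (for example a TCP socket) is left out.

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional, Tuple, Union


@dataclass
class SensorReport:
    """PC2 -> PC1: results PC2 derived from the microphones, gas sensors and FSRs."""
    sound_direction_deg: Optional[float]
    smell: Optional[str]
    touch_manner: Optional[str]


@dataclass
class ControlCommand:
    """PC1 -> PC2: visual target and the emotion to express."""
    target_xy: Optional[Tuple[float, float]]
    emotion: str
    strength: int  # expression strength grade, 0..50


Message = Union[SensorReport, ControlCommand]


def encode(message: Message) -> bytes:
    """Serialize as one JSON object per line, tagged with the message type."""
    payload = {"type": type(message).__name__, "body": asdict(message)}
    return (json.dumps(payload) + "\n").encode("utf-8")


def decode(line: bytes) -> Message:
    """Inverse of encode()."""
    payload = json.loads(line.decode("utf-8"))
    body = payload["body"]
    if payload["type"] == "SensorReport":
        return SensorReport(**body)
    if payload["type"] == "ControlCommand":
        target = body["target_xy"]
        # JSON has no tuple type, so restore the (x, y) pair.
        body["target_xy"] = tuple(target) if target is not None else None
        return ControlCommand(**body)
    raise ValueError(f"unknown message type: {payload['type']}")
```

Newline-delimited messages keep framing trivial on a stream connection: the receiver reads one line and calls `decode` on it.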

Fig. 3 System Configuration of WE-3RV

3. Facial Expression

WE-3RV produces facial expressions, neck motions and voice based on its emotion. We based the robot's facial control on Ekman's Six Basic Facial Expressions and defined seven facial patterns: "Happiness", "Anger", "Surprise", "Sadness", "Fear", "Disgust" and "Neutral". The strength of each facial expression can be varied in fifty grades by proportionally interpolating the displacement from the neutral facial expression. WE-3RV's facial patterns are shown in Fig. 4. Moreover, in addition to the seven basic facial expressions, we added "Drunken" and "Shame" facial expressions to WE-3RV, shown in Fig. 5.
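The fifty-grade interpolation can be written as pose(g) = neutral + (full − neutral) × g / 50 for each actuator, where g = 0 is the neutral face and g = 50 is the full expression. The sketch below implements exactly that; the actuator names and positions in the usage note are hypothetical placeholders, not WE-3RV's actual joint values.

```python
from typing import Dict

GRADES = 50  # the fifty-grade scale from the text

Pose = Dict[str, float]  # actuator name -> position


def graded_expression(neutral: Pose, full: Pose, grade: int, grades: int = GRADES) -> Pose:
    """Pose at `grade` (0 = neutral, `grades` = full expression) by proportional interpolation."""
    if not 0 <= grade <= grades:
        raise ValueError(f"grade must be in 0..{grades}")
    return {
        name: neutral[name] + (full[name] - neutral[name]) * grade / grades
        for name in neutral
    }
```

For instance, with a hypothetical neutral pose `{"eyebrow_inner": 0.0, "lip_corner": 0.0}` and full "Happiness" pose `{"eyebrow_inner": 10.0, "lip_corner": -20.0}`, grade 25 gives `{"eyebrow_inner": 5.0, "lip_corner": -10.0}`, halfway between the two.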

4. Mental Modeling

WE-3RV has not only sensing and expression functions but also a mental model. External stimuli change its mental state and emotion, and it expresses its emotion through facial expressions and neck motions. In addition, just as each human has a different personality, WE-3RV has a robot personality, which consists of a Sensing Personality and an Expression Personality. The former determines how a stimulus affects the robot's mental state; the latter affects its facial expressions and neck motions. Fig. 6 shows the flow chart of the robot control and personality.

Fig. 6 Robot Control and Personality
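The split between the two personality components can be sketched as two sets of gains: one applied to incoming stimuli before they affect the mental state, and one applied to the outgoing expression. The gain-based form, the class and method names, and the example values are hypothetical; the text does not give the equations behind either personality. The 0 to 50 output range reuses the fifty-grade expression scale.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class SensingPersonality:
    """HYPOTHETICAL per-stimulus gains: how strongly each kind of stimulus affects the robot."""
    gains: Dict[str, float]

    def effect(self, stimulus: str, magnitude: float) -> float:
        """Weighted strength of a stimulus; unlisted stimuli use a neutral gain of 1.0."""
        return self.gains.get(stimulus, 1.0) * magnitude


@dataclass
class ExpressionPersonality:
    """HYPOTHETICAL gains on how strongly an emotion is shown."""
    facial_gain: float
    neck_gain: float

    def facial_grade(self, intensity: float, grades: int = 50) -> int:
        """Map an emotion intensity in [0, 1] to an expression grade 0..grades."""
        scaled = min(max(intensity * self.facial_gain, 0.0), 1.0)
        return round(scaled * grades)

    def neck_amplitude(self, base_deg: float) -> float:
        """Scale a nominal neck motion amplitude."""
        return base_deg * self.neck_gain
```

Two robots with identical mental states would then differ in behavior: for example, a "timid" sensing personality with gain 2.0 on loud sounds reacts twice as strongly to them, and an "expressive" expression personality with facial gain 1.5 shows an intensity of 0.4 at grade 30 instead of 20.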

A 3D mental space consisting of a pleasantness axis, an activation axis and a certainty axis is defined for WE-3RV, as shown in Fig. 7. The vector M, called the "Mental Vector", expresses the mental state of WE-3RV. When the robot senses stimuli from the environment, it changes the Mental Vector M according to the Equations of Emotion. Moreover, we mapped 7 different emotions into regions of the 3D mental space, as shown in Fig. 8. WE-3RV determines its emotion from the region through which the Mental Vector passes.

Fig. 7 3D Mental Space

Fig. 8 Emotional Mapping
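The mental-space model can be sketched as a 3D vector (pleasantness, activation, certainty) that stimuli push around, plus a lookup from position to emotion. The Equations of Emotion and the region boundaries of Fig. 8 are not given here, so this sketch substitutes two hypothetical simplifications: the vector decays toward the origin each step before the stimulus effect is added, and each emotion's region is the set of points closest to an invented prototype position.

```python
import math
from typing import Dict, Tuple

Vec3 = Tuple[float, float, float]  # (pleasantness, activation, certainty)

# HYPOTHETICAL prototype positions for the seven emotions (not the regions of Fig. 8).
EMOTION_PROTOTYPES: Dict[str, Vec3] = {
    "Neutral": (0.0, 0.0, 0.0),
    "Happiness": (0.8, 0.5, 0.5),
    "Anger": (-0.7, 0.8, 0.5),
    "Surprise": (0.0, 0.8, -0.8),
    "Sadness": (-0.7, -0.6, 0.3),
    "Fear": (-0.6, 0.6, -0.6),
    "Disgust": (-0.8, 0.2, 0.6),
}


def update_mental_vector(m: Vec3, stimulus: Vec3, decay: float = 0.9) -> Vec3:
    """HYPOTHETICAL update: decay toward the origin, then add the stimulus effect."""
    return (
        decay * m[0] + stimulus[0],
        decay * m[1] + stimulus[1],
        decay * m[2] + stimulus[2],
    )


def classify_emotion(m: Vec3, prototypes: Dict[str, Vec3] = EMOTION_PROTOTYPES) -> str:
    """Emotion whose prototype is nearest to the Mental Vector (Euclidean distance)."""
    return min(prototypes, key=lambda name: math.dist(m, prototypes[name]))
```

A pleasant, activating stimulus of (0.8, 0.5, 0.5) applied to a neutral robot yields "Happiness"; if no further stimuli arrive, the decay pulls the Mental Vector back toward the origin and the emotion returns to "Neutral". In this sketch a personality would enter by scaling the stimulus vector before it is added.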