General Transfer Skill System (GTSS)

| At Waseda University, the research in humanoid robots has been focused

in reproducing human-like activities from an engineering point of view.

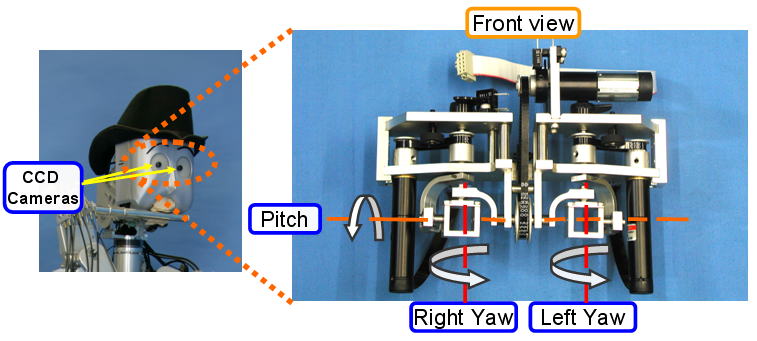

Such robots are equipped with different sensory systems (visual, auditive,

tactile, etc.) in order to interact with humans in different situations.

The way of teaching robots to reproduce such kind of skills have been based

on different methods (Neural Networks, Motion Capture, etc.). These methods

have enable robots to walk, dance, play the flute, show emotions, etc.

The utility of such kind of robots may be extended if they are used for

transferring skills to unskilled people that not only reproduce the skill

learnt previously from an expert, but also evaluate and feedback students

in order to enhance their performance. Therefore, the robot must be able

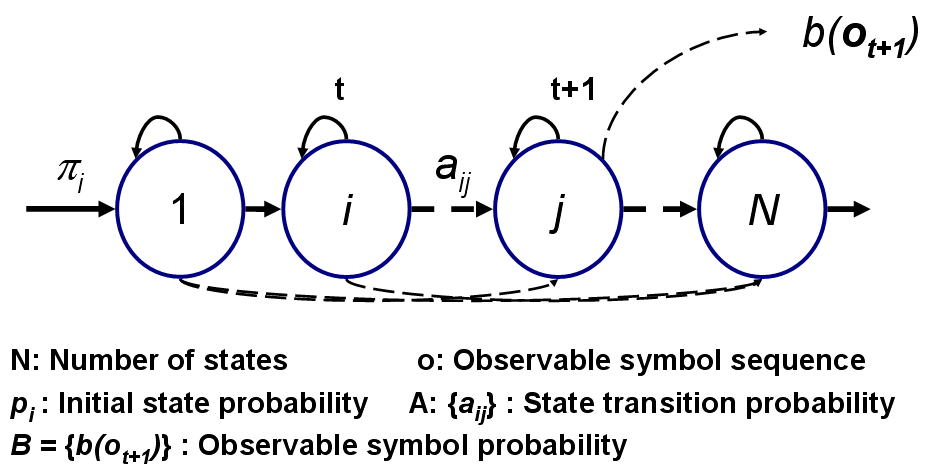

of recognizing undergoing actions (Neuronal Networks, Hidden Markov Models,

etc.), analyzing the human performance trough the design automated procedures

for evaluating the user’s improvements based on quantitative measurement

indexes (task quality, task efficiency, etc.), and finally to feedback

the student trough different perceptual channels (auditive, graphical,

tactile, etc.) that may enhance the student’s performance. As a first approach, we have been developing a musical teaching system using the Waseda Flutist Robot. The research of the Waseda Flutist Robot has been carried out for more that a decade as a mean for understanding the human flute playing mechanism and as an approach for finding useful applications for humanoid robots to interact with humans. Recently, the introduction of the flutist robot as a novel tool for transferring skills from robot to unskilled flutists has been explored. In the 2004, the idea of using the anthropomorphic flutist robot, as a novel teaching tool for improving the performance of beginner flutist player, has been presented. In that time, a set of preliminary experiments were carried out with Japanese students from high schools. In that experiment, two groups were divided: the first one was taught only by a human professor, while in the second one, the flutist robot was added to the learning process. As a result, the students enhanced their performances quicker in the second case. Inspired from those preliminary results, a general transfer skill system (GTSS) was introduced in this year. This proposed system was implemented using the Waseda Flutist Robot No.4 Refined II (WF-4RII). Up to now, this robot has performed musical scores similar as human does. But, by including the GTSS system (figure below), the interaction between humanoid robots and humans can be extended. Basically, the main contributions of the GTSS are the addition of perceptual capabilities to the robot (vision system and recognition system). Furthermore, the idea of implementing a general architecture opens the possibility of using some of the modules to teach different skills and perhaps, to be used by different robots with few modifications. The GTSS is composed by two main modules: internal and external. 
The internal module is composed of four subsystems that are used independently of the task to be taught: the sensory, recognition, evaluation, and interaction systems. The external module is composed of two subsystems that depend heavily on the task to be taught: the human skill model and the skill evaluation. Within both subsystems, the experience of a human professor is abstracted in some way. Finally, the GTSS is connected to the Robotic Control System via TCP/IP. The robot system communicates the results from the GTSS to the student through an Interaction Interface that displays verbal and graphical feedback.


Fig. General Transfer Skill System (from robot to human)
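The split between task-independent and task-dependent modules described above can be illustrated with a small Python sketch. It is a hypothetical illustration under stated assumptions, not the WF-4RII implementation: the class names `InternalModule` and `ExternalModule`, the pitch-based skill model, the scoring rule, and the newline-delimited JSON feedback message are all invented for the example. What it tries to show is the design point made in the text: the internal pipeline (sensory, recognition, evaluation, interaction) stays the same, while the human skill model and skill evaluation are plugged in from outside.

```python
"""Hypothetical sketch of the GTSS module split. All names, the scoring
rule, and the message format are illustrative assumptions."""
import json
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class ExternalModule:
    """Task-dependent part: abstracts the professor's experience for one skill."""
    # Human skill model (assumed form): action label -> reference value.
    skill_model: Dict[str, float]
    # Skill evaluation (assumed form): (measured, reference) -> score in [0, 1].
    evaluate: Callable[[float, float], float]


@dataclass
class InternalModule:
    """Task-independent part: sensory, recognition, evaluation, interaction."""
    external: ExternalModule
    log: List[dict] = field(default_factory=list)

    def sense(self, raw: dict) -> dict:
        # Sensory system: features are assumed to be already extracted
        # (e.g. by a vision or audio front end), so they pass through.
        return raw

    def recognize(self, features: dict) -> str:
        # Recognition system: placeholder for e.g. an HMM or neural classifier;
        # here the action label is simply read from the features.
        return features["action"]

    def assess(self, action: str, features: dict) -> float:
        # Evaluation system: delegates scoring to the task-dependent module.
        reference = self.external.skill_model[action]
        return self.external.evaluate(features["value"], reference)

    def interact(self, action: str, score: float) -> bytes:
        # Interaction system: builds the feedback message that would be sent
        # to the robot control system over TCP/IP (format is an assumption).
        message = {"action": action, "score": round(score, 2)}
        self.log.append(message)
        return (json.dumps(message) + "\n").encode("utf-8")

    def step(self, raw: dict) -> bytes:
        # One pass through the task-independent pipeline.
        features = self.sense(raw)
        action = self.recognize(features)
        score = self.assess(action, features)
        return self.interact(action, score)


if __name__ == "__main__":
    # Hypothetical flute task: hold a long tone at A4 (440 Hz); full marks at
    # the reference pitch, zero once the error reaches 20 Hz (assumed rule).
    flute_task = ExternalModule(
        skill_model={"long_tone_A4": 440.0},
        evaluate=lambda measured, ref: max(0.0, 1.0 - abs(measured - ref) / 20.0),
    )
    gtss = InternalModule(external=flute_task)
    print(gtss.step({"action": "long_tone_A4", "value": 435.0}))
    # b'{"action": "long_tone_A4", "score": 0.75}\n'
```

Under this sketch, teaching a different skill would only require a different `ExternalModule`; the internal pipeline and the shape of the message sent to the robot control system stay unchanged, which mirrors the reuse argument made for the general architecture.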