Fig. 1 WE-4RII (Whole View)

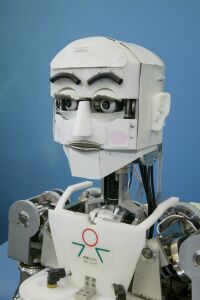

Fig. 2 WE-4RII (Head Part)

We have been developing Emotion Expression Humanoid Robots since 1995, aiming at new mechanisms and functions for a humanoid robot that can communicate naturally with humans by expressing human-like emotion.

In 2003, 9-DOFs Emotion Expression Humanoid Arms were developed to improve emotional expression. The arms were integrated with WE-4 (Waseda Eye No.4) to develop the Emotion Expression Humanoid Robot WE-4R (Waseda Eye No.4 Refined), which could express its emotions using its facial expressions, torso, and arms. In 2004, we developed WE-4RII (Waseda Eye No.4 Refined II) by integrating the anthropomorphic robot hand RCH-1 (RoboCasa Hand No.1) into WE-4R. RCH-1 has 6-DOFs and is capable of emotion expression, grasping, and tactile sensing.

Fig. 1 and Fig. 2 present the hardware overview of the Emotion Expression Humanoid Robot WE-4RII. It has 59-DOFs (Hands: 12, Arms: 18, Waist: 2, Neck: 4, Eyeballs: 3, Eyelids: 6, Eyebrows: 8, Lips: 4, Jaw: 1, Lungs: 1) and many sensors that serve as sense organs (visual, auditory, cutaneous, and olfactory sensation) for extrinsic stimuli. Each part is described below.
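As a quick arithmetic check, the per-part figures listed above can be summed to confirm the stated total. This is only a sketch; the dictionary name `DOF_BY_PART` is introduced here for illustration.

```python
# Degrees of freedom per body part, copied from the list in the text.
DOF_BY_PART = {
    "Hands": 12,
    "Arms": 18,
    "Waist": 2,
    "Neck": 4,
    "Eyeballs": 3,
    "Eyelids": 6,
    "Eyebrows": 8,
    "Lips": 4,
    "Jaw": 1,
    "Lungs": 1,
}

# Sum over all parts; this should match the 59-DOFs quoted in the text.
total_dof = sum(DOF_BY_PART.values())
print(total_dof)
```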

The eyeballs have 1-DOF for the pitch axis and 2-DOF for the yaw axis. The maximum angular velocity of the eyeballs is 600[deg/s], similar to that of a human. The eyelids have 6-DOF. WE-4RII can rotate its upper eyelids, which lets it form expressions with the corners of its eyes. The maximum angular velocity of opening and closing the eyelids is 900[deg/s], similar to that of a human. Furthermore, the robot can blink within 0.3[s], which is as fast as a human.

To miniaturize the head part, we newly developed an Eye Unit that integrates the eyeball and eyelid parts. Moreover, in the Eye Unit of WE-4RII, the eyeball pitch axis motion is mechanically synchronized with the opening and closing motion of the upper eyelids. Therefore, coordinated eyeball-eyelid motion is achieved in hardware.

WE-4RII's neck has 4-DOF: the upper pitch, the lower pitch, the roll, and the yaw axes. Using the upper and lower pitch DOF, WE-4RII can extend and retract its neck like a human. The maximum angular velocity of each axis is 160[deg/s], similar to that of a human.

WE-4RII has 9-DOFs Emotion Expression Humanoid Arms. Each arm consists of a base shoulder part (pitch and yaw axes), a shoulder part (pitch, yaw, and roll axes), an elbow part (pitch axis), and a wrist part (pitch, yaw, and roll axes). Using the 2-DOFs of the base shoulder, it can move the whole shoulder up and down and back and forth. This enables WE-4RII to make movements such as squaring its shoulders when angry or shrugging its shoulders when sad. Therefore, WE-4RII can express its emotions effectively with its arms.

The hand part was developed at ROBOCASA, the joint laboratory for research on humanoid and personal robotics. The anthropomorphic robot hand RCH-1 (RoboCasa Hand No.1) was designed and developed for WE-4RII at the ARTS Lab of SSSA (Scuola Superiore Sant'Anna) in Italy.

The fingers are driven by a tendon drive mechanism, as shown in Fig. 6. A wire runs from the fingertip to the root of the mechanism, taking one turn around each pulley. When the actuator pulls the wire, the finger automatically wraps around the object, as shown in Fig. 7, because the pulleys can rotate freely relative to each other. This mechanism lets the hand conform softly and gently to objects of any shape without complicated control.

To grasp a spherical object, humans abduct the thumb so that it opposes the little finger; for a cylindrical grasp, we adduct the thumb so that it opposes the middle finger. This abduction and adduction mechanism makes it possible to grasp objects of various shapes effectively.

RCH-1 has three types of tactile sensors: distributed on/off contact sensors, FSRs, and 3D force sensors. The distributed on/off contact sensor is a switch made of thin sheets. It is placed at 16 locations: 15 on the inner side of the fingers and 1 on the palm, as shown in Fig. 8. The FSR is the same type used in the head part of WE-4. By using a two-layered sheet, we can recognize not only the magnitude of the force but also the manner of touching: "Push", "Stroke", or "Hit". A 3D force sensor that measures the fingertip force is mounted in the fingertips of the thumb and index finger.

WE-4RII has a 2-DOF waist composed of pitch and yaw axes. Using the waist motion, WE-4RII can produce emotional expressions not only with its neck but also with the upper half of its body.

WE-4RII produces its facial expressions using its eyebrows, lips, jaw, facial color, and voice. The eyebrows consist of flexible sponges, and each eyebrow has 4-DOF.

We used spindle-shaped springs for WE-4RII's lips. The lips change their shape by being pulled from 4 directions, and WE-4RII's 1-DOF jaw opens and closes the lips.

For facial color, we used red and blue EL (Electro Luminescence) sheets. We applied them on the cheeks. WE-4RII can express red and pale facial colors.

For the voice system, we used a small speaker that was set in the jaw. The robot voice is a synthetic voice made by LaLaVoice 2001 (TOSHIBA Corporation).

WE-4RII has two color CCD cameras in its eyes. The images from its eyes are captured to a PC by an image capture board. WE-4RII can recognize targets of any color and can recognize eight targets at the same time. After calculating the center of gravity and area of the targets, WE-4RII can follow them with its eyes, neck, and waist. This makes it possible to follow a target of any color in three-dimensional space.
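The center-of-gravity and area calculation for a color target can be sketched as follows. This is a minimal illustration, not the robot's actual vision code: the function names, the image representation (rows of RGB tuples), and the color thresholds in `is_reddish` are all assumptions made for the example.

```python
def target_centroid_and_area(image, is_target):
    """Return ((cx, cy), area) over pixels for which is_target(pixel) is True.

    image is a list of rows, each row a list of (r, g, b) tuples.
    Returns (None, 0) when no pixel matches.
    """
    area = 0
    sum_x = 0
    sum_y = 0
    for y, row in enumerate(image):
        for x, pixel in enumerate(row):
            if is_target(pixel):
                area += 1
                sum_x += x
                sum_y += y
    if area == 0:
        return None, 0
    # The center of gravity is the mean position of the matching pixels.
    return (sum_x / area, sum_y / area), area


def is_reddish(pixel):
    # Hypothetical color test; the real thresholds are not given in the text.
    r, g, b = pixel
    return r > 200 and g < 80 and b < 80


RED, BLACK = (255, 0, 0), (0, 0, 0)
frame = [
    [BLACK, BLACK, BLACK, BLACK],
    [BLACK, RED, RED, BLACK],
    [BLACK, RED, RED, BLACK],
]
centroid, area = target_centroid_and_area(frame, is_reddish)
print(centroid, area)
```

With one such centroid per camera, the horizontal offset between the two images could in principle be used to estimate distance, but that step is not described in the text and is left out here.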

We used two small condenser microphones for auditory sensation. WE-4RII can localize the direction of a sound from the difference in loudness between the right and left microphones.
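Localization from a left/right loudness difference can be illustrated with a simple level comparison. The sketch below is an assumption about how such a comparison might look, not the method used on the robot: the function names, the use of RMS level, and the 1 dB decision margin are all hypothetical.

```python
import math


def rms(samples):
    """Root-mean-square level of one block of audio samples."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def sound_side(left, right, margin_db=1.0, eps=1e-12):
    """Classify the source as 'left', 'right', or 'center'.

    The decision uses the level difference in decibels between the two
    microphones; eps avoids taking the logarithm of zero for silent input.
    """
    level_diff_db = 20.0 * math.log10((rms(left) + eps) / (rms(right) + eps))
    if level_diff_db > margin_db:
        return "left"
    if level_diff_db < -margin_db:
        return "right"
    return "center"


loud = [0.5, -0.5, 0.5, -0.5]
quiet = [0.1, -0.1, 0.1, -0.1]
print(sound_side(loud, quiet))   # louder on the left microphone
print(sound_side(quiet, loud))   # louder on the right microphone
print(sound_side(loud, loud))    # equal levels
```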

WE-4RII has tactile and temperature sensation as its cutaneous senses. We used FSRs (Force Sensing Resistors) for tactile sensation. An FSR can detect even very weak forces and is a thin, light device. We devised a method for recognizing not only the magnitude of the force but also the manner of touching ("Push", "Stroke", or "Hit") by using a two-layer FSR structure. For temperature sensation, WE-4RII has a thermistor. FSRs are also installed on the palms to detect contact.

We used four semiconductor gas sensors for olfactory sensation and set them in WE-4RII's nose. WE-4RII can recognize the smells of alcohol, ammonia, and cigarette smoke.

Fig. 12 shows the total system configuration of WE-4RII. We use three personal computers (PC/AT compatible) connected to each other by Ethernet.

PC1 (Pentium 4, 2.66[GHz], OS: Windows XP) obtains and analyzes the output signals from the olfactory and cutaneous sensors using a 12-bit A/D acquisition board. In addition, it analyzes the sounds from the microphones using a sound board. The mental state is determined from this stimulus information, the hand sensing data from PC2, and the visual images from PC3. PC1 also controls all DC motors except those of the hands and, at the same time, sends data to PC2 for controlling the hands. PC2 (Pentium III, 1.0[GHz], OS: Windows 2000) obtains and analyzes RCH-1's sensing data using a 12-bit A/D acquisition board and a digital I/O board. It sends the analyzed data to PC1 and controls the finger positions of RCH-1 based on the data sent by PC1. PC3 (Pentium 4, 3.0[GHz], OS: Windows XP) captures the visual images from the CCD cameras, calculates the center of gravity and brightness of the target, and sends them to PC1.

We use the Six Basic Facial Expressions of Ekman in the robot's facial control, and have defined seven facial patterns: "Happiness", "Anger", "Disgust", "Fear", "Sadness", "Surprise", and "Neutral". The strength of each emotional expression can be varied in fifty grades by proportional interpolation of the differences in position from the "Neutral" expression. The speed of the arm movement also changes according to the emotion of the robot. Therefore, the emotion of the robot can be expressed by both the posture and the speed of the arms. WE-4RII has the emotional expression patterns shown in Fig. 13.
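The fifty-grade proportional interpolation can be sketched as a linear blend between the "Neutral" pose and a full-strength pose. The function name, the representation of a pose as a list of joint positions, and the numeric values below are assumptions for illustration only.

```python
GRADES = 50  # number of strength grades stated in the text


def interpolate_expression(neutral, full, grade):
    """Blend from the neutral pose toward the full-strength pose.

    neutral and full are lists of joint positions (hypothetical units);
    grade runs from 0 (neutral) to GRADES (full strength).
    """
    if not 0 <= grade <= GRADES:
        raise ValueError("grade must be between 0 and 50")
    ratio = grade / GRADES
    # Each joint moves in proportion to its difference from neutral.
    return [n + ratio * (f - n) for n, f in zip(neutral, full)]


neutral_pose = [0.0, 10.0]    # hypothetical joint positions
happy_pose = [20.0, -10.0]    # hypothetical full-strength "Happiness"
half_happy = interpolate_expression(neutral_pose, happy_pose, 25)
print(half_happy)
```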

The Mental Dynamics, the mental transition caused by the internal and external environment of the robot, is extremely important for emotional expression. Therefore, in constructing the mental model, we considered the human brain to have a three-layered structure consisting of reflex, emotion, and intelligence, and we are approaching the mental model starting from the reflex. We also divided the emotion into "Learning System", "Mood", and "Dynamic Response" according to their working duration.

Moreover, in order to realize bilateral interaction between human and robot, we based our research on A. H. Maslow's "Hierarchy of Needs" and introduced the Need Model, consisting of the "Appetite", the "Need for Security", and the "Need for Exploration". Consequently, the robot can behave according to its needs.

WE-4RII changes its mental state according to external and internal stimuli, and expresses its emotion using facial expressions, facial color, and body movement. We introduced the information flow shown in Fig. 15 into the robot. There are two major flows: one caused by the external environment, and the other caused by the robot's internal state. Furthermore, we introduced the Robot Personality because each human has a different personality. The Robot Personality consists of the Sensing Personality and the Expression Personality. The need and the emotion form a two-layered structure, with the need in a lower layer than the emotion, because we considered the need to be nearer to instinct than the emotion. Furthermore, the need and emotion affect each other through the Sensing Personality.

The Robot Personality consists of the Sensing Personality and the Expression Personality. The former determines how a stimulus affects the mental state, and the latter determines how the robot expresses its emotion. These personalities are easy to assign, so a wide variety of Robot Personalities can be obtained easily. Moreover, we introduced the "Learning System" so that the robot can learn from its experiences and dynamically construct its personality based on them.

We adopted the 3D mental space, which consists of a pleasantness axis, an activation axis and a certainty axis, shown in Fig. 16. The vector E named the "Emotion Vector" expresses the mental state of WE-4. Furthermore, we newly introduce the "Mood Vector" M that consists of a pleasantness axis and an activation axis.

The pleasantness component of the Mood Vector changes with the current mental state. To describe the activation component of the Mood Vector, however, we introduced an internal clock, which is a kind of autonomic nervous system.

The Emotion Vector E is described by the Equations of Emotion when the robot senses stimuli. We considered that the mental dynamics, the transition of a human mental state, might be expressed by equations similar to the equation of motion. Therefore, we expanded the Equations of Emotion into a second-order differential equation modeled on the equation of motion. With it, the robot can express the transient mental state after it senses stimuli from the environment, and we can obtain complex and varied mental trajectories.
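The text does not give the Equations of Emotion explicitly, so the following is only a sketch of what a second-order model patterned on the equation of motion could look like. It assumes a mass-spring-damper form, inertia·E'' + viscosity·E' + elasticity·E = F(t), applied independently to each axis of the 3D mental space; the coefficient names, their values, and the stimulus function are hypothetical.

```python
def simulate_emotion(force, steps, dt=0.01,
                     inertia=1.0, viscosity=2.0, elasticity=4.0):
    """Integrate inertia*E'' + viscosity*E' + elasticity*E = force(t).

    E has three components (pleasantness, activation, certainty).
    Uses semi-implicit Euler steps and returns the list of E states.
    """
    e = [0.0, 0.0, 0.0]   # emotion vector, starting at the origin
    v = [0.0, 0.0, 0.0]   # its rate of change
    trajectory = [tuple(e)]
    for k in range(steps):
        f = force(k * dt)
        for i in range(3):
            accel = (f[i] - viscosity * v[i] - elasticity * e[i]) / inertia
            v[i] += accel * dt
            e[i] += v[i] * dt
        trajectory.append(tuple(e))
    return trajectory


def brief_pleasant_stimulus(t):
    # Hypothetical stimulus: a push along the pleasantness axis for 0.5 s.
    return (1.0, 0.0, 0.0) if t < 0.5 else (0.0, 0.0, 0.0)


trajectory = simulate_emotion(brief_pleasant_stimulus, steps=2000)
peak_pleasantness = max(state[0] for state in trajectory)
final_state = trajectory[-1]
```

Under these assumptions the pleasantness component rises while the stimulus is applied and then decays back toward the origin, which is the kind of transient response the paragraph above describes.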

Finally, we mapped 7 different emotions onto the 3D mental space, as shown in Fig. 17. WE-4 determines its emotion according to the region that the Mental Vector passes through.

Bilateral interaction is important for natural communication between human and robot. We considered that active behavior by the robot is necessary to realize bilateral interaction. Therefore, we introduced the Need Model into the robot mental model. The need state of a robot is described by the matrix N, named the "Need Matrix", which is updated by a first-order difference equation. Although the robot's needs consist of the "Appetite", the "Need for Security", and the "Need for Exploration" in this study, the Need Matrix is expandable depending on the number of need factors.
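The exact difference equation is not given in the text. As a minimal sketch, a first-order difference equation can be read as "next need state = current need state + change caused by stimuli", with one entry per need factor. The function name and the numeric increments below are assumptions.

```python
def step_need(need, delta):
    """One step of a first-order difference equation: N[t+1] = N[t] + dN[t].

    need and delta hold one value per need factor, so the same update
    works for any number of factors.
    """
    if len(need) != len(delta):
        raise ValueError("need and delta must have the same length")
    return [n + d for n, d in zip(need, delta)]


# Three factors as in the text: appetite, security, exploration.
need = [0.0, 0.0, 0.0]
for _ in range(3):
    # Hypothetical per-step increments caused by stimuli.
    need = step_need(need, [0.1, 0.0, 0.2])
print(need)

# Adding a fourth, hypothetical need factor requires no change to the update.
extended = step_need([0.0, 0.0, 0.0, 0.0], [0.1, 0.0, 0.2, 0.5])
```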

The appetite is based on the total consumed energy, which is the sum of the basal metabolism energy and the output energy. We considered that the basal metabolism energy is determined by the robot's emotional state, and that the output energy is determined by internal or external stimuli such as the total electric current.

The need for security is a type of defense behavior. The defense reflex of withdrawing from strong stimuli is a similar reaction. However, the need for security generates defense behavior in response to long-term stimuli. When a robot senses dangerous stimuli from the environment over a long period, it can withdraw from them or express a defense behavior even if the stimuli are too weak to cause the defense reflex. We realized the Need for Security by learning the position and strength of the stimuli when the robot felt stimuli from the environment.

When humans and animals encounter a new situation or a new object, they show exploratory behavior out of curiosity because their need for exploration is high. We realized the need for exploration by learning the relation between the visual information and the target property.

The robot can actively generate and express behavior based on its need in order to satisfy that need. A robot with a need continues to exhibit the same behavior until the need is satisfied as a result of its active behavior. We also considered the need to be one of the internal stimuli to the robot. By assigning the Sensing Personality for the need, the need affects the mental state.

Human memory is related to mood through mood state-dependency and mood congruency. Humans easily retrieve a memory when they are in the same mood as when the memory was stored; this is mood state-dependency. Mood congruency, on the other hand, means that a mood helps retrieve memories whose content matches that mood. Basically, humans tend to retrieve pleasant memories if they are pleasant and, conversely, unpleasant memories if they are unpleasant. Moreover, human performance is related to the activation level. If the activation level becomes too high or too low, active human performance becomes impossible. The best human performance comes at a medium activation level. We developed an encoding model using a self-organizing map and a retrieval model using chaotic neural networks. The robot can recognize a stimulus according to the mood, the activation level, and the appetite.

Click the following pictures to see the demonstration videos.

Emotion Facial Expressions: The robot expresses 7 basic emotions using facial expression.

Emotion Expression by Upper-half Body: The robot expresses emotions using facial expression, body, arm, and hand motion.

4 Sensations: The robot reacts to stimuli.

Various Behaviors: The robot can show human-like motions. For example, it can do exercises with a dumbbell.

Consciousness: The robot reacts to the stimulus with the highest consciousness.

Part of this research was conducted at the Humanoid Robotics Institute (HRI), Waseda University. We would like to thank the Italian Ministry of Foreign Affairs, General Direction for Cultural Promotion and Cooperation, for its support of the establishment of the ROBOCASA laboratory and of the realization of the two artificial hands. Part of this work was also supported by a Grant-in-Aid for the WABOT-HOUSE Project by Gifu Prefecture. Finally, we would like to thank ARTS Lab, NTT Docomo, SolidWorks Corp., the Advanced Research Institute for Science and Engineering of Waseda University, and Prof. Hiroshi Kimura for their support of our research.

Last Update: 2006-11-01