
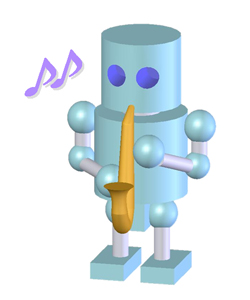

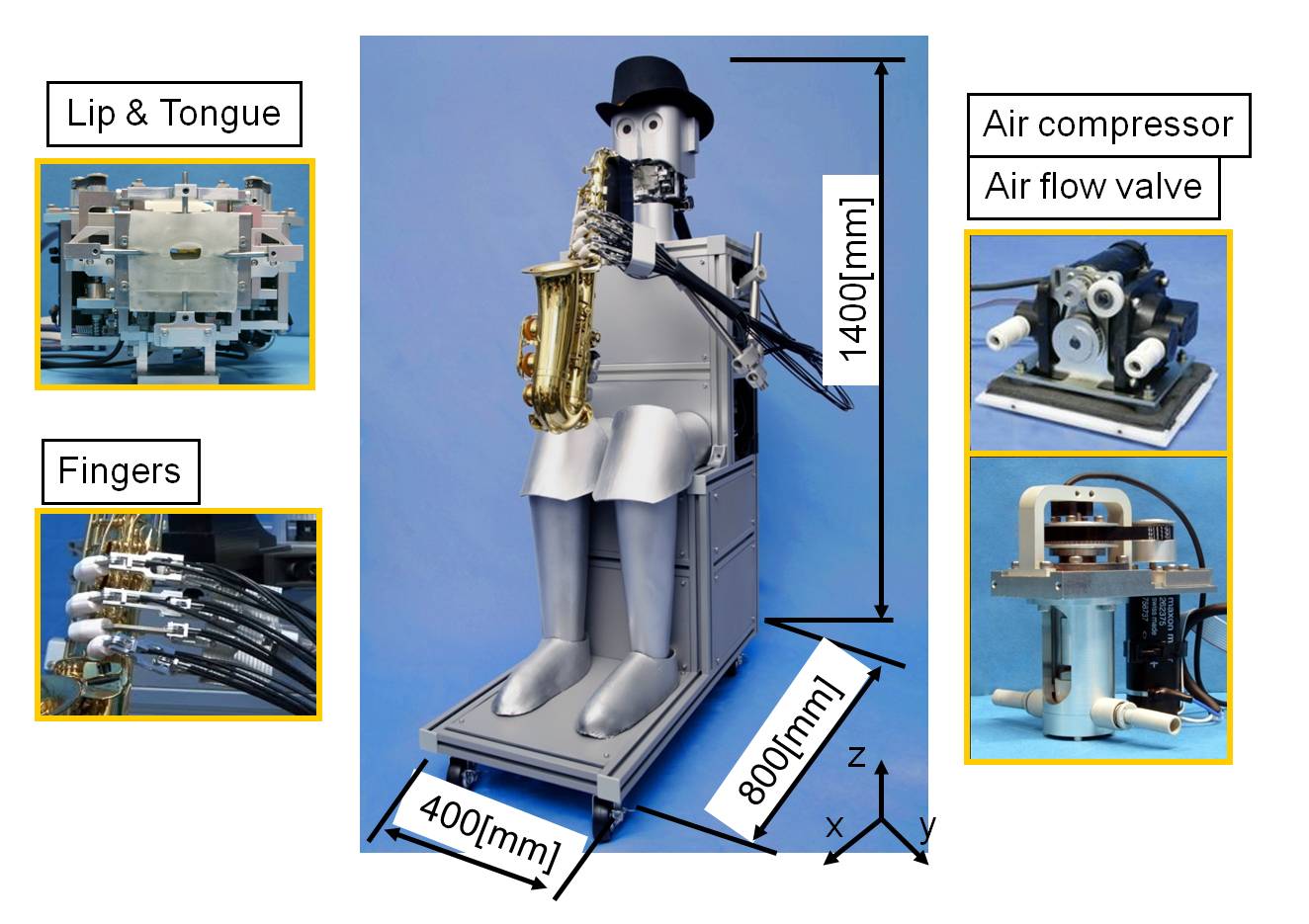

As a long-term goal, we have proposed the development of musical performance robots that can interact with their musical partners (both human players and other musical performance robots) at the emotional level of perception. To this end, we are enhancing the perceptual capabilities of the anthropomorphic flutist robot and developing a new musical performance robot that will, in the future, be able to interact with the flutist robot. For this purpose, we decided to develop a robot that plays a different kind of wind instrument. Wind instruments fall into two categories: brass instruments and woodwind instruments. One important difference between them is that woodwind instruments are non-directional: the sound they produce propagates in all directions with approximately equal volume. Brass instruments, on the other hand, are highly directional, with most of the sound traveling straight outward from the bell. For this reason, woodwind instruments pose a greater engineering challenge when reproducing human motor control. There are basically three types of woodwind instruments: single-reed (e.g., clarinet, saxophone), double-reed (e.g., oboe, bagpipes) and the flute. In particular, we have been developing an anthropomorphic saxophonist robot since 2008.


WAS-1 (2008)

WF-4RV (2010)


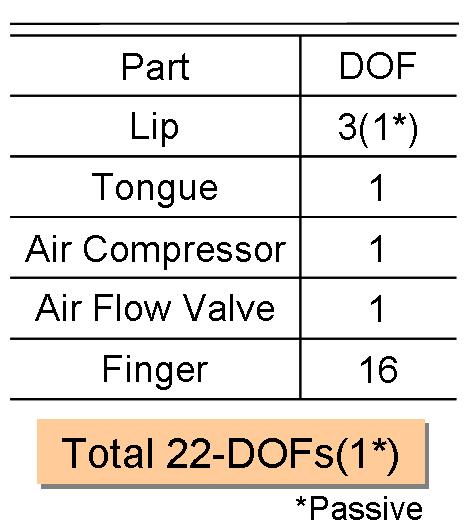

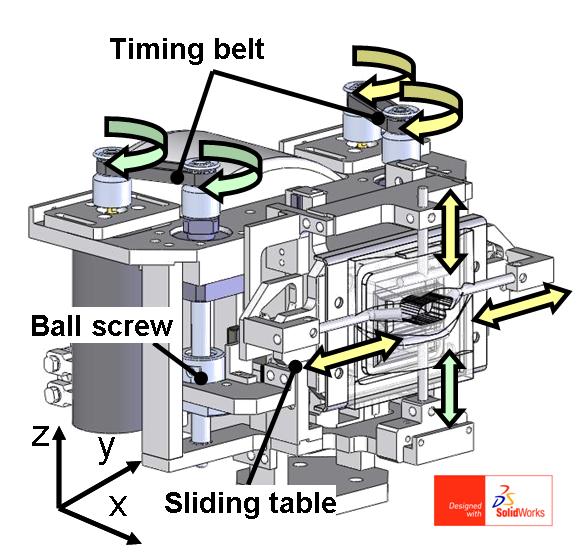

The lip mechanism has 2 DOFs that control the up/down motion of the lower lip (which controls pitch changes) and the upper lip (which controls sound pressure changes). In addition, a passive 1-DOF mechanism adjusts the shape of the sideway lips.


Mouth (left), mouth mechanism (right)
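The division of labor between the two active lip DOFs can be illustrated with a minimal pitch-correction sketch. It assumes that a pitch estimate of the current note is available, that pushing the lower lip up raises the pitch, and it uses placeholder gain and travel limits; `lower_lip_step` and its parameters are hypothetical, not the robot's actual controller. The pitch error is expressed in cents, 1200·log2(f_measured / f_target).

```python
import math


def cents_error(measured_hz: float, target_hz: float) -> float:
    """Deviation of the measured pitch from the target pitch, in cents."""
    return 1200.0 * math.log2(measured_hz / target_hz)


def lower_lip_step(position_mm: float, measured_hz: float, target_hz: float,
                   gain_mm_per_cent: float = 0.01,
                   min_mm: float = 0.0, max_mm: float = 5.0) -> float:
    """One proportional correction step for the lower-lip DOF (hypothetical).

    Assumption: a larger position_mm (lip pushed up) raises the pitch, so a
    sharp note (positive cents) moves the lip down. Gain and travel limits
    are placeholder values.
    """
    error = cents_error(measured_hz, target_hz)
    new_position = position_mm - gain_mm_per_cent * error
    # Keep the command inside the assumed mechanical travel of the lip.
    return max(min_mm, min(max_mm, new_position))


if __name__ == "__main__":
    # A4 played 5 Hz sharp: the lower lip is lowered slightly.
    print(lower_lip_step(2.0, measured_hz=445.0, target_hz=440.0))
```

The upper-lip DOF (sound pressure) would need a separate loop driven by a loudness measurement; it is left out of this sketch.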


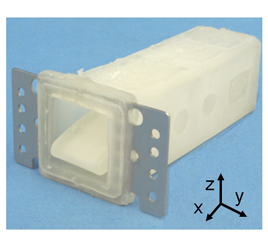

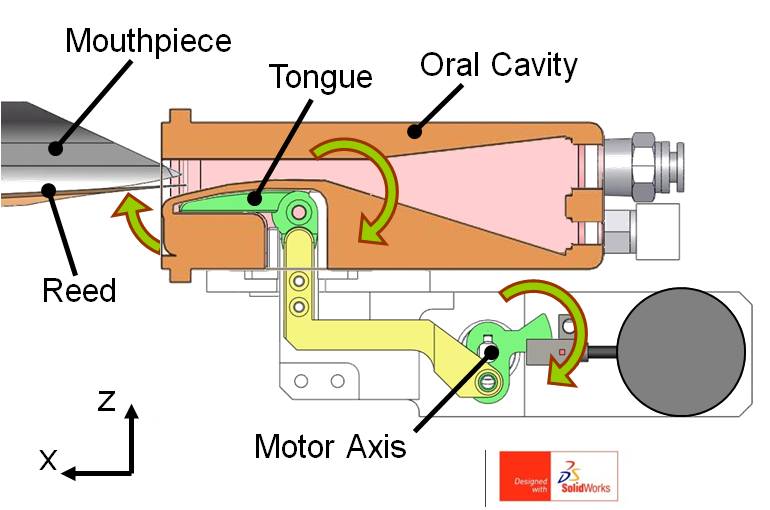

The tongue and oral cavity are made of rubber. As shown in the left figure, the tongue is in contact with the saxophone reed, and the torque of the motor is transferred to the tongue through a link mechanism. As a result, the robot can control dynamic properties of the sound such as "attack" and "release". In addition, to extend the range of sound, the shape of the oral cavity has been redesigned based on measurements obtained from ultrasound images of professional saxophonists.


Oral cavity (left), tongue mechanism (right)
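One hypothetical way to drive the tongue mechanism from a score is to turn each note into a pair of tongue commands: the tongue leaves the reed at the note onset (attack) and returns to the reed shortly before the note ends (release). The sketch below assumes every note is tongued separately and uses a placeholder release gap; `tongue_schedule` and `TongueEvent` are illustrative names only.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class TongueEvent:
    time_s: float
    action: str  # "attack": tongue leaves the reed; "release": tongue returns


def tongue_schedule(notes: List[Tuple[float, float]],
                    release_gap_s: float = 0.02) -> List[TongueEvent]:
    """Turn (start_s, duration_s) notes into tongue commands.

    Assumption: each note is tongued separately, and the tongue is put back
    on the reed release_gap_s before the note ends (placeholder value) so
    that the sound stops cleanly.
    """
    events: List[TongueEvent] = []
    for start, duration in sorted(notes):
        events.append(TongueEvent(start, "attack"))
        end = start + max(duration - release_gap_s, 0.0)
        events.append(TongueEvent(end, "release"))
    return events


if __name__ == "__main__":
    for event in tongue_schedule([(0.0, 0.5), (0.5, 0.25)]):
        print(event)
```

Slurred passages, where the tongue stays off the reed between notes, would need an extra flag per note.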


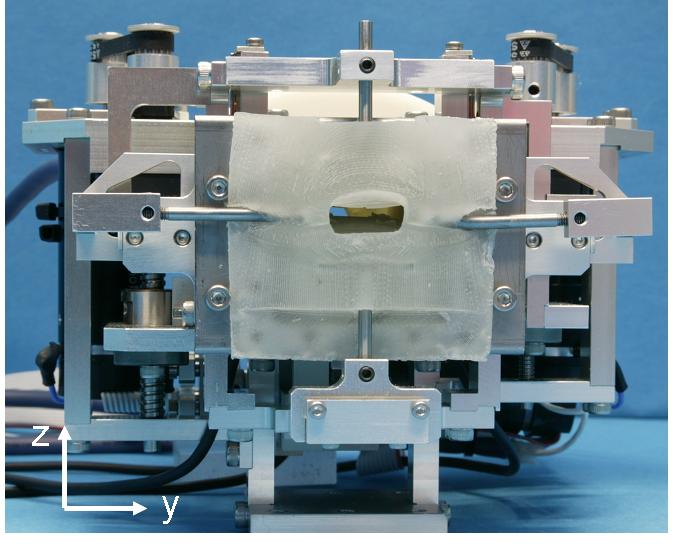

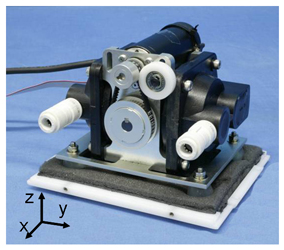

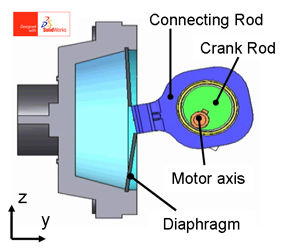

For the air source (lung mechanism), a DC servo motor drives the diaphragm of the air pump through an eccentric crank mechanism. This mechanism has been designed to provide a minimum air flow of 20 L/min and a minimum pressure of 30 kPa. In addition, another DC servo motor controls an air valve so that the air delivered by the pump is effectively rectified.


Air compressor (left), air compressor mechanism (right)
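As a rough sizing check for the lung mechanism: an eccentric crank with eccentricity e moves the diaphragm through a stroke of 2e, so an ideal single-acting pump delivers about A·2e per crank revolution, where A is the effective diaphragm area. The sketch below applies this relation to the 20 L/min target. The area, eccentricity and volumetric efficiency are assumed values, and the 30 kPa pressure requirement is not modeled.

```python
def pump_flow_lpm(area_m2: float, eccentricity_m: float, crank_rpm: float,
                  volumetric_efficiency: float = 1.0) -> float:
    """Delivered flow of an ideal single-acting diaphragm pump, in L/min."""
    stroke_m = 2.0 * eccentricity_m           # diaphragm travel per revolution
    swept_m3 = area_m2 * stroke_m             # volume pushed out per revolution
    return swept_m3 * crank_rpm * 1000.0 * volumetric_efficiency  # m^3 -> L


def rpm_for_flow(target_lpm: float, area_m2: float, eccentricity_m: float,
                 volumetric_efficiency: float = 1.0) -> float:
    """Crank speed needed to reach target_lpm with the same ideal model."""
    litres_per_rev = area_m2 * 2.0 * eccentricity_m * 1000.0 * volumetric_efficiency
    return target_lpm / litres_per_rev


if __name__ == "__main__":
    # Placeholder geometry: 20 cm^2 effective area, 5 mm eccentricity.
    print(rpm_for_flow(20.0, area_m2=0.002, eccentricity_m=0.005))
```

With these placeholder numbers the pump moves 0.02 L per revolution, so roughly 1000 rpm would be needed for 20 L/min; any leakage (volumetric efficiency below 1) raises that figure.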


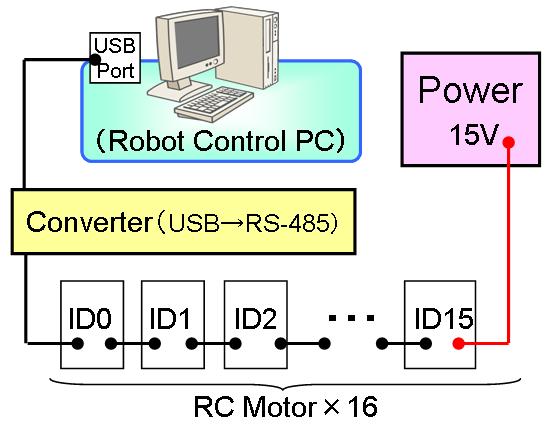

The alto saxophone can play notes from A#2 to F#5. To cover this range, a human-like hand with 16 DOFs has been designed. To reduce the weight of the hand, each finger is actuated through a wire-and-pulley mechanism connected to the shaft of an RC motor. The RS485 communication protocol is used to control the motion of each individual finger.


Fingers (left), finger control system (right)
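The playable range quoted above can be expressed as MIDI note numbers, which is convenient when performance data arrives over MIDI. This sketch assumes the scientific pitch convention (C4 = 60). The page does not state which octave convention it uses, but the width of the range, 33 chromatic notes including both ends, does not depend on it. `note_to_midi` and `is_playable` are illustrative helpers.

```python
# Semitone offsets within an octave (sharps only, as written on this page).
NOTE_OFFSETS = {"C": 0, "C#": 1, "D": 2, "D#": 3, "E": 4, "F": 5,
                "F#": 6, "G": 7, "G#": 8, "A": 9, "A#": 10, "B": 11}


def note_to_midi(name: str) -> int:
    """Convert a note name such as 'A#2' to a MIDI number, assuming C4 = 60.

    Only single-digit, non-negative octaves are handled in this sketch.
    """
    pitch, octave = name[:-1], int(name[-1])
    return (octave + 1) * 12 + NOTE_OFFSETS[pitch]


LOWEST, HIGHEST = note_to_midi("A#2"), note_to_midi("F#5")


def is_playable(name: str) -> bool:
    """True if the note lies inside the A#2 to F#5 range quoted above."""
    return LOWEST <= note_to_midi(name) <= HIGHEST


if __name__ == "__main__":
    print(LOWEST, HIGHEST, HIGHEST - LOWEST + 1)  # 46 78 33
```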


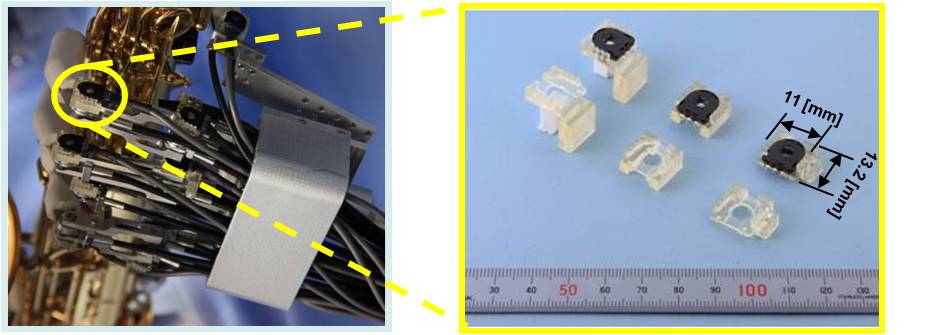

In addition, rotary encoders have been embedded in the finger mechanisms to compensate for the dead time in the response of each finger. As a result, the responses of all fingers can be synchronized during a performance.

Finger embedded sensors (left), sensor mount (right)
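The dead-time compensation can be sketched in two steps: estimate each finger's delay from the first encoder change after a command, then send each finger's command earlier by its own delay so that all fingers start moving together. The sample format, the encoder threshold and the function names are assumptions for illustration, not the robot's actual firmware.

```python
from typing import Dict, List, Tuple


def estimate_dead_time(command_time_s: float,
                       samples: List[Tuple[float, int]],
                       threshold_counts: int = 2) -> float:
    """Delay between a command and the first encoder change of one finger.

    samples: (time_s, encoder_counts) pairs recorded from the command onward;
    the first sample is taken as the resting position.
    """
    baseline = samples[0][1]
    for time_s, counts in samples:
        if abs(counts - baseline) >= threshold_counts:
            return time_s - command_time_s
    raise ValueError("finger did not move within the recorded window")


def command_times(target_time_s: float,
                  dead_time_s: Dict[str, float]) -> Dict[str, float]:
    """Send each finger's command earlier by its own dead time so that
    all fingers start moving at target_time_s."""
    return {finger: target_time_s - delay
            for finger, delay in dead_time_s.items()}


if __name__ == "__main__":
    samples = [(0.00, 100), (0.01, 100), (0.02, 101), (0.03, 104)]
    delay = estimate_dead_time(0.0, samples)
    print(command_times(1.0, {"index": delay, "ring": 0.05}))
```

A finger with a longer dead time is simply commanded earlier; the motion itself is left unchanged.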


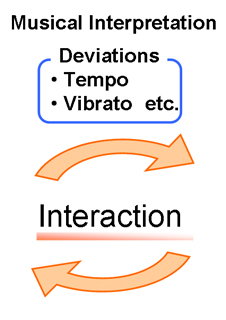

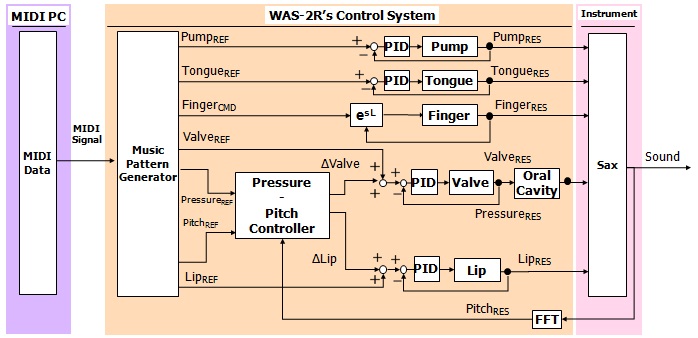

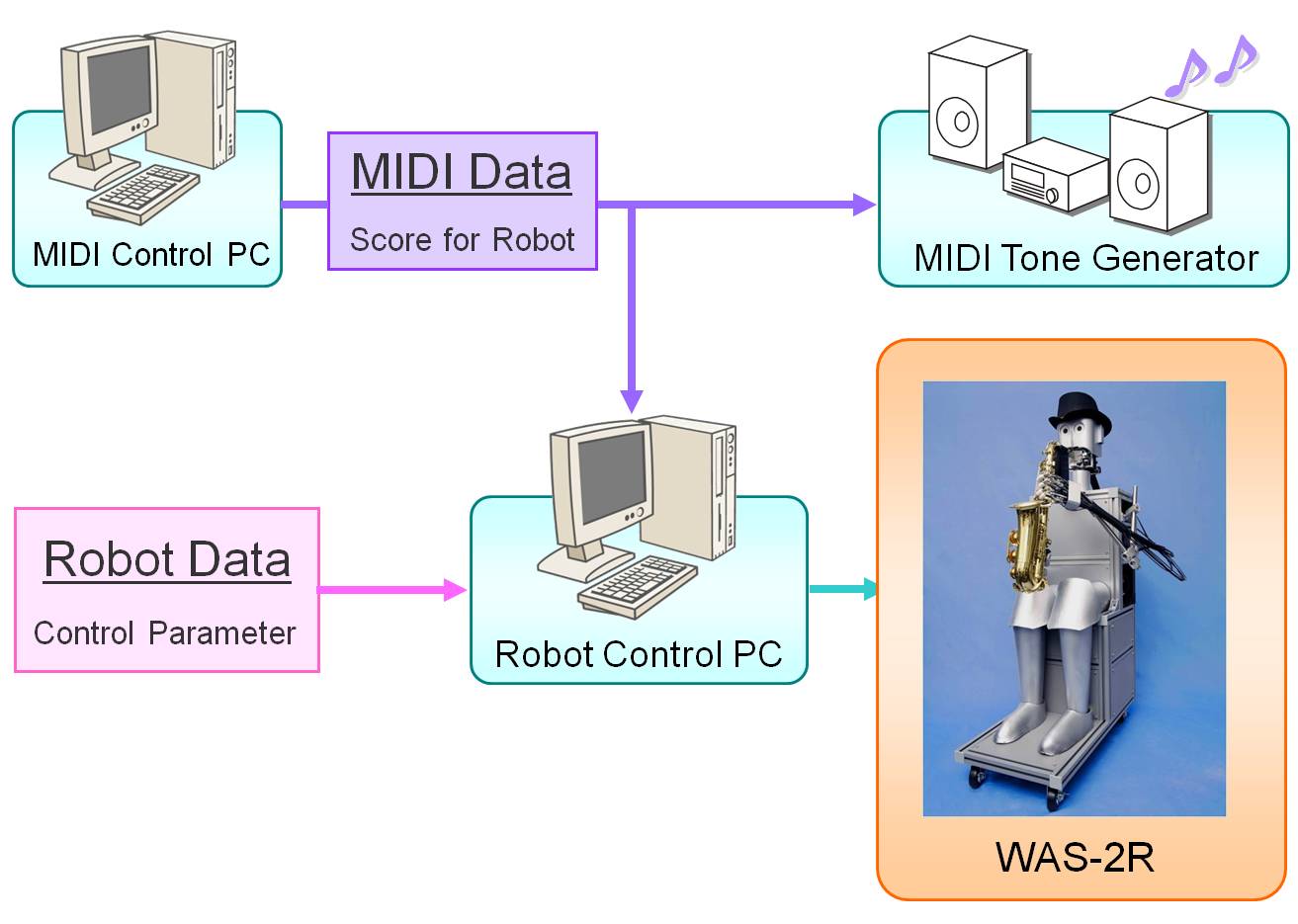

The MIDI accompaniment system of WAS-2R consists of two computers that control the robot's musical performance: one controls the robot, and the other generates the accompaniment MIDI data. The two computers are connected through MIDI, and the performance is synchronized using the MIDI signal.
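The page does not say which MIDI messages carry the synchronization. One common option is the MIDI real-time Timing Clock message (status byte 0xF8), which is sent 24 times per quarter note. Assuming that option, the robot-control computer could estimate the accompaniment tempo as below. `tempo_from_clock` is a hypothetical helper, and reading bytes from an actual MIDI port is left out.

```python
from typing import List, Optional, Tuple

TIMING_CLOCK = 0xF8  # MIDI real-time "Timing Clock" status byte
PPQN = 24            # Timing Clock messages per quarter note


def tempo_from_clock(messages: List[Tuple[float, int]]) -> Optional[float]:
    """Estimate tempo (BPM) from timestamped MIDI status bytes.

    messages: (arrival_time_s, status_byte) pairs, e.g. as received from the
    accompaniment computer (assumed setup). Non-clock bytes are ignored.
    """
    ticks = [t for t, status in messages if status == TIMING_CLOCK]
    if len(ticks) < 2:
        return None
    mean_interval = (ticks[-1] - ticks[0]) / (len(ticks) - 1)
    return 60.0 / (PPQN * mean_interval)


if __name__ == "__main__":
    # Start (0xFA) followed by one quarter note of clock ticks at 120 BPM.
    msgs = [(0.0, 0xFA)] + [(i / 48, TIMING_CLOCK) for i in range(25)]
    print(tempo_from_clock(msgs))
```

Averaging over many ticks smooths out jitter in individual message arrival times.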


Saxophone performance by WAS-2R (MPEG, 1:10, 11.5 MB): "What A Wonderful World", composed by G. Douglass & George David Weiss


Humanoid Robotics Institute, Waseda University

Toyota Motor Corporation

SolidWorks Japan K.K.


Takanishi Laboratory | Last Update 2010.11.30 | Copyright (C) 1990-2010 Team Musical Performance Robot / Takanishi Laboratory. All Rights Reserved.